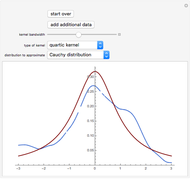

In this tutorial, we'll carry on with the problem of probability density function inference, but using another method: kernel density estimation. In statistics, kernel density estimation (KDE) is the application of kernel smoothing to probability density estimation, i.e., a non-parametric method for estimating the probability density function of a random variable using kernels as weights. The goal of density estimation is to estimate the underlying density. Each point is treated as the center of a kernel, and KDE smooths the data by convolving each point x with that kernel. Kernel density estimates are closely related to histograms but can be endowed with properties such as smoothness or continuity by using a suitable kernel. KDE is a nonparametric technique for estimating the density of scattered points. Kernel density estimation has two difficulties, the first being optimal bandwidth estimation.

When observations are independent, a probability density function, or the corresponding cumulative distribution function, may be estimated nonparametrically by using a kernel. There is an enormous literature on this topic, but relatively little has been written on applying kernel estimation to time series; see, for example, Markovich (2007). If a parametric model is felt to be too restrictive, the question arises whether a distribution-free filter is possible. If the distribution is thought to change over time, observations may be weighted by adapting the filters described in the preceding chapters. Not only can updating be carried out recursively, but a likelihood function can be constructed from the predictive distributions, as in an observation-driven model. Hence dynamic parameters may be estimated by maximum likelihood. Furthermore, the dynamic specification may be checked using the residuals given by the predictive cumulative distribution function.
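To make the idea of centering a kernel on each point concrete, here is a minimal sketch of a one-dimensional estimator. It assumes a Gaussian kernel and a hand-picked bandwidth of 0.5. The function names and the sample values are illustrative and do not come from any particular library.

```python
import math


def gaussian_kernel(u):
    """Standard normal density, used here as the kernel (an assumption)."""
    return math.exp(-0.5 * u * u) / math.sqrt(2.0 * math.pi)


def kde(x, samples, bandwidth):
    """Evaluate the kernel density estimate at x.

    Each sample contributes one kernel centered on it; the estimate is
    the average of those kernels, rescaled by the bandwidth.
    """
    if bandwidth <= 0:
        raise ValueError("bandwidth must be positive")
    n = len(samples)
    total = sum(gaussian_kernel((x - s) / bandwidth) for s in samples)
    return total / (n * bandwidth)


# Illustrative data, not taken from the text.
samples = [-1.2, -0.4, 0.0, 0.3, 1.1, 2.5]

# Evaluate on a grid and check numerically that the estimate integrates to ~1.
step = 0.01
grid = [-10.0 + step * i for i in range(2001)]
density = [kde(x, samples, bandwidth=0.5) for x in grid]
area = sum(density) * step
print(f"approximate area under the estimate: {area:.4f}")
```

A smaller bandwidth gives a spikier estimate that follows individual points, while a larger one gives a smoother estimate that may blur real structure.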
A GARCH filter weights squared observations to produce a measure of variance, but variance is of limited utility when the conditional distribution is not Gaussian. Letting the score drive the dynamics reflects the full conditional distribution, and this was the approach adopted for the models described in Chapters 3, 4 and 5.

Kernel density estimation (KDE) is a useful analysis and visualisation tool that is often the end product of a visualisation or analysis workflow. Kernel density estimates are nice visualisations, but their use can also be taken one step further. The Kernel Density tool calculates the density of features in a neighborhood around those features. It can be calculated for both point and line features. Each conflict event observed on the ground is interpreted as a random realization of an underlying process, whose distribution is estimated using a kernel.

An introduction to kernel density estimation, explanations of all methods implemented in zfit, and a thorough comparison of their performance can be found in Performance of univariate kernel density estimation methods in TensorFlow by Marc Steiner, from which many parts here are taken.

More unusual are the rates of convergence and asymptotic (normal) distributions of our dyadic density estimates. Specifically, we show that they converge at the same rate as the (unconditional) dyadic sample mean: the square root of the number, N, of nodes. This differs from the results for nonparametric estimation of densities and regression functions for monadic data, which generally have a slower rate of convergence than their corresponding sample mean. We suggest an estimate of their asymptotic variances inspired by a combination of (i) Newey's (1994) method of variance estimation for kernel estimators in the "monadic" setting and (ii) a variance estimator for the (estimated) density of a simple network first suggested by Holland and Leinhardt (1976).
We study nonparametric estimation of density functions for undirected dyadic random variables (i.e., random variables defined for all $n \overset{\text{def}}{\equiv} \binom{N}{2}$ unordered pairs of agents/nodes in a weighted network of order N). These random variables satisfy a local dependence property: any random variables in the network that share one or two indices may be dependent, while those sharing no indices in common are independent. In this setting, we show that density functions may be estimated by an application of the kernel estimation method of Rosenblatt (1956) and Parzen (1962). Finding the optimal parameters of the kernel density estimator, above all the bandwidth, is therefore extremely important in order to obtain a good estimate.
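One common starting point for choosing the bandwidth is Silverman's rule of thumb, sketched below. The text does not say which selection method it has in mind, so this is an illustrative heuristic and not the method of any source cited here. The rule is tuned for a Gaussian kernel and roughly unimodal data, and the function name and sample values are assumptions.

```python
import statistics


def silverman_bandwidth(samples):
    """Rule-of-thumb bandwidth: 0.9 * min(std, IQR / 1.34) * n ** (-1/5).

    Falls back to the standard deviation alone if the interquartile
    range is zero (for example, when many values are tied).
    """
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    std = statistics.stdev(samples)
    q1, _, q3 = statistics.quantiles(samples, n=4)
    iqr = q3 - q1
    spread = min(std, iqr / 1.34) if iqr > 0 else std
    if spread <= 0:
        raise ValueError("samples have no spread")
    return 0.9 * spread * n ** (-0.2)


# Illustrative data, not taken from the text.
data = [-1.2, -0.4, 0.0, 0.3, 1.1, 2.5]
h = silverman_bandwidth(data)
print(f"rule-of-thumb bandwidth: {h:.3f}")
```

For multimodal or heavily skewed data this rule tends to oversmooth, so data-driven alternatives such as cross-validation are usually worth comparing against it.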
